
9/6/2023

Entropy is a measure of

In settings that deal with computer and communication systems, entropy refers to its meaning in the field of information theory, where it has a precise mathematical definition measuring randomness and uncertainty. Information theory grew out of the initial work of Claude Shannon at Bell Labs in the 1940s, where he also did significant work on the science of cryptography. In honor of his work, this use of “entropy” is sometimes called “Shannon entropy.” The fundamental question of how information is represented is a common and deep thread connecting communication, data compression, and cryptography, and information theory is a key component of all three of these areas.

Entropy - Basic Model and Some Intuition

To talk precisely about the information content in messages, we first need a mathematical model that describes how information is transmitted. We consider an information source as having access to a set of possible messages, from which it selects a message, encodes it somehow, and transmits it across a communication channel. The question of encoding is very involved: we can encode for the most compact representation (data compression), for the most reliable transmission (error detection/correction coding), to make the information unintelligible to an eavesdropper (encryption), or with other goals in mind. For now we focus only on how messages are selected, not on how they are encoded.

How are messages selected? We take the source to be probabilistic.

Definition: A source is an ordered pair \((M, P)\), where \(M\) is a finite set of possible messages and \(P: M \rightarrow [0, 1]\) is a probability distribution on \(M\).

We’re not saying that data is really chosen at random, but this is a good model that has proved its usefulness over time. For example, using a biased random number generator can reduce the entropy of a source from 128 bits to around 60 bits, which can have serious consequences for security, as we’ll see below.
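The definition above names the source \((M, P)\) but does not write out the entropy formula. Here is a minimal Python sketch, assuming the standard Shannon definition \(H = -\sum_{m \in M} P(m)\log_2 P(m)\), measured in bits. `shannon_entropy` takes the list of message probabilities, and `biased_key_entropy` treats a key as independent bits that each equal 1 with probability `p_one`; both names are hypothetical. The text does not say what kind of bias produces its 128-to-60-bit figure, so the bias `p_one = 0.1` used here is an illustrative assumption that happens to land near 60 bits.

```python
import math


def shannon_entropy(probs):
    """Entropy in bits of a source whose message probabilities are `probs`.

    Messages with probability 0 are skipped, since p * log2(p) -> 0 as p -> 0.
    """
    if not math.isclose(sum(probs), 1.0):
        raise ValueError("probabilities must sum to 1")
    return -sum(p * math.log2(p) for p in probs if p > 0)


def biased_key_entropy(n_bits, p_one):
    """Entropy of an n-bit key whose bits are independent and each is 1 with probability p_one.

    Hypothetical model of a biased generator: total entropy is n_bits times the entropy of one bit.
    """
    return n_bits * shannon_entropy([p_one, 1 - p_one])


# A fair coin carries 1 bit; a uniform choice among 8 messages carries 3 bits.
print(shannon_entropy([0.5, 0.5]))               # 1.0
print(shannon_entropy([1 / 8] * 8))              # 3.0

# An unbiased 128-bit key versus one whose bits are 1 only 10% of the time (assumed bias).
print(biased_key_entropy(128, 0.5))              # 128.0
print(round(biased_key_entropy(128, 0.1), 1))    # 60.0
```

For a single bit, the entropy is largest (exactly 1 bit) when both values are equally likely, so any bias in the generator pulls the total for a 128-bit key below 128 bits.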

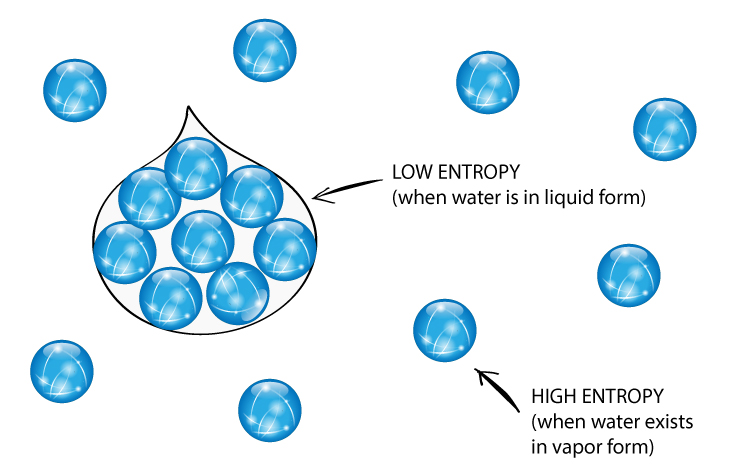

The word entropy is used in several different ways in English, but it always refers to some notion of randomness, disorder, or uncertainty. For example, in physics the term describes how a system’s thermal energy is spread among the random motions of its molecules, and hence is a measure of disorder. In general English usage, entropy is a less precise term but still refers to disorder or randomness.

In chemistry, the concept of entropy goes back to Rudolf Clausius, starting with his 1850 work on the theory of heat; he coined the word “entropy” in 1865. Entropy is not itself a measure of a system’s thermal energy; it measures the randomness or disorder in a system, and the greater the disorder, the higher the entropy. In the solid state, atoms are closely packed at their equilibrium positions in the crystal lattice, which gives a highly ordered arrangement with very little disorder, so solids have much lower entropy than liquids and gases. A change of state requires the substance to absorb heat: as a solid melts into a liquid, and as a liquid vaporizes into a gas, the disorder of the system increases, and so does its entropy. The increase is larger when a liquid changes into vapor than when a solid changes into a liquid.
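The link between absorbed heat and the entropy change of a phase transition can be made concrete with the standard relation for a reversible change at constant temperature and pressure, \(\Delta S = q_{\text{rev}}/T = \Delta H/T\). The enthalpy values below are commonly tabulated figures for water, supplied here only for illustration; they do not come from the original text.

```latex
\Delta S_{\text{fus}} = \frac{\Delta H_{\text{fus}}}{T_{\text{m}}}
  = \frac{6.01\ \text{kJ mol}^{-1}}{273.15\ \text{K}}
  \approx 22.0\ \text{J mol}^{-1}\,\text{K}^{-1}

\Delta S_{\text{vap}} = \frac{\Delta H_{\text{vap}}}{T_{\text{b}}}
  = \frac{40.7\ \text{kJ mol}^{-1}}{373.15\ \text{K}}
  \approx 109\ \text{J mol}^{-1}\,\text{K}^{-1}
```

For water, vaporization raises the entropy roughly five times as much as melting, consistent with the larger jump in disorder from liquid to gas.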
